savecontext

💡 Summary

SaveContext is a local-first MCP server that provides AI coding assistants with persistent memory and context management.

🎯 Target Audience

🤖 AI Roast: “Powerful, but the setup might scare off the impatient.”

Risk: Critical. Review: shell/CLI command execution; outbound network access (SSRF, data egress); API keys/tokens handling and storage; filesystem read/write scope and path traversal. Run with least privilege and audit before enabling in production.

SaveContext

The OS for AI coding agents

Overview

SaveContext is a Model Context Protocol (MCP) server that gives AI coding assistants persistent memory across sessions. It combines context management, issue tracking, and project planning into a single local-first tool that works with any MCP-compatible client.

Core capabilities:

- Context & Memory — Save decisions, progress, and notes that persist across conversations

- Issue Tracking — Manage tasks, bugs, and epics with dependencies and hierarchies

- Plans & PRDs — Create specs and link them to implementation issues

- Semantic Search — Find past decisions by meaning, not just keywords

- Checkpoints — Snapshot and restore session state at any point
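Searching "by meaning" generally means comparing embedding vectors rather than matching words. The sketch below is only an illustration of that idea, not SaveContext's implementation: it ranks notes by cosine similarity against a query vector. The three-dimensional vectors and the helper names `cosine_similarity` and `rank_by_meaning` are invented for the example; real embeddings would come from a model and have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_meaning(query_vec, items):
    """Return item texts sorted by similarity to the query, most similar first.

    items is a list of (text, embedding) pairs.
    """
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in items]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored]


# Toy vectors, invented for illustration only.
notes = [
    ("Switched CSS framework", [0.0, 0.2, 0.9]),
    ("Chose JWT for auth", [0.9, 0.1, 0.0]),
]
print(rank_by_meaning([1.0, 0.0, 0.0], notes))
```

The query vector points in nearly the same direction as the auth note, so that note ranks first even though the query shares no keywords with it.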

🛠️ Features

- Local Semantic Search: AI-powered search using Ollama or Transformers.js for offline embedding generation

- Multi-Agent Support: Run multiple CLI/IDE instances simultaneously with agent-scoped session tracking

- Automatic Provider Detection: Detects 30+ MCP clients including coding tools (Claude Code, Cursor, Cline, VS Code, JetBrains, etc.) and desktop apps (Claude Desktop, Perplexity, ChatGPT, Raycast, etc.)

- Session Lifecycle Management: Full session state management with pause, resume, end, switch, and delete operations

- Multi-Path Sessions: Sessions can span multiple related directories (monorepos, frontend/backend, etc.)

- Project Isolation: Automatically filters sessions by project path, so you only see sessions from your current repository

- Auto-Resume: If an active session exists for your project, automatically resume it instead of creating duplicates

- Session Management: Organize work by sessions with automatic channel detection from git branches

- Checkpoints: Create named snapshots of session state with optional git status capture

- Checkpoint Search: Lightweight keyword search across all checkpoints with project/session filtering to find historical decisions

- Smart Compaction: Analyze priority items and generate restoration summaries when approaching context limits

- Channel System: Automatically derive channels from git branches (e.g., feature/auth → feature-auth)

- Local Storage: SQLite database with WAL mode for fast, reliable persistence

- Cross-Tool Compatible: Works with any MCP-compatible client (Claude Code, Cursor, Factory, Codex, Cline, etc.)

- Fully Offline: No cloud account required, all data stays on your machine

- Plans System: Create PRDs and specs, link issues to plans, track implementation progress

- Dashboard UI: Local Next.js web interface for visual session, context, and issue management
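Two of the features above, channel derivation and project isolation across multi-path sessions, can be sketched in a few lines. This is a rough illustration under stated assumptions, not the project's code: the only mapping the source confirms is feature/auth → feature-auth, so lowercasing and collapsing every other non-alphanumeric run into a hyphen is a guess, and the function names `derive_channel` and `session_matches_project` are hypothetical.

```python
import re
from pathlib import PurePosixPath


def derive_channel(branch):
    """Turn a git branch name into a channel slug, e.g. 'feature/auth' -> 'feature-auth'.

    Assumption: any run of characters outside a-z and 0-9 becomes a single hyphen.
    """
    slug = re.sub(r"[^a-z0-9]+", "-", branch.lower())
    return slug.strip("-")


def session_matches_project(session_paths, cwd):
    """True if cwd is one of the session's paths or lies inside one of them."""
    current = PurePosixPath(cwd)
    for raw in session_paths:
        root = PurePosixPath(raw)
        if current == root or root in current.parents:
            return True
    return False


print(derive_channel("feature/auth"))
print(session_matches_project(["/repo/api", "/repo/web"], "/repo/web/src"))
```

Comparing path components rather than string prefixes matters here: a plain `startswith` check would wrongly treat `/repo/api-v2` as part of `/repo/api`.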

📦 Installation

Prerequisites

Bun is required - SaveContext uses bun:sqlite for optimal performance:

    # Install Bun (macOS, Linux, WSL)
    curl -fsSL https://bun.sh/install | bash

Using bunx (Recommended)

bunx @savecontext/mcp

Global Install

bun install -g @savecontext/mcp

From source (Development)

    git clone https://github.com/greenfieldlabs-inc/savecontext.git
    cd savecontext/server
    bun install
    bun run build

⚡️ Quick Start

Get started with SaveContext in under a minute:

    # 1. Install Bun (if not already installed)
    curl -fsSL https://bun.sh/install | bash

    # 2. Add to your AI tool's MCP config

Add this to your MCP configuration (Claude Code, Cursor, etc.):

    {
      "mcpServers": {
        "savecontext": {
          "command": "bunx",
          "args": ["@savecontext/mcp"]
        }
      }
    }

That's it! Your AI assistant now has persistent memory across sessions.
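If your MCP config already lists other servers, the savecontext entry has to be merged in rather than pasted over the file. A minimal sketch of that merge follows, assuming the config has already been loaded into a dict; where the file lives depends on the client, so no path is shown. The `other-tool` entry and the function name `add_savecontext_server` are hypothetical.

```python
import json


def add_savecontext_server(config):
    """Return a copy of an MCP config dict with the savecontext entry added.

    Existing servers are preserved and the input dict is not modified.
    """
    merged = dict(config)
    servers = dict(merged.get("mcpServers", {}))
    servers["savecontext"] = {"command": "bunx", "args": ["@savecontext/mcp"]}
    merged["mcpServers"] = servers
    return merged


# Hypothetical existing config with one unrelated server.
existing = {"mcpServers": {"other-tool": {"command": "node", "args": ["server.js"]}}}
print(json.dumps(add_savecontext_server(existing), indent=2))
```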

"bunx not found" — GUI apps (Claude Desktop) and some terminals (Ghostty, tmux) don't inherit your shell's PATH.

Fix: Use the full path to bunx and include PATH in env:

    {
      "mcpServers": {
        "savecontext": {
          "command": "/Users/YOUR_USERNAME/.bun/bin/bunx",
          "args": ["@savecontext/mcp"],
          "env": {
            "PATH": "/Users/YOUR_USERNAME/.bun/bin:/usr/local/bin:/opt/homebrew/bin:/usr/bin:/bin"
          }
        }
      }
    }

Find your bun path with: which bunx

Common locations:

- macOS/Linux: ~/.bun/bin/bunx

- Homebrew: /opt/homebrew/bin/bunx

- npm global: /usr/local/bin/bunx
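The "bunx not found" fix can also be generated instead of typed by hand. The sketch below resolves bunx with Python's `shutil.which` (the same lookup `which bunx` performs) and builds the entry with an absolute command and an explicit PATH. It assumes a POSIX-style PATH joined with `:` and reuses the fallback directories from the example above; `build_server_entry` is a hypothetical helper name.

```python
import os
import shutil


def build_server_entry(bunx_path=None):
    """Build the savecontext MCP entry using an absolute path to bunx.

    If bunx_path is not given, look bunx up on the current PATH.
    """
    resolved = bunx_path or shutil.which("bunx")
    if resolved is None:
        raise FileNotFoundError("bunx not found on PATH; pass its full path explicitly")
    bin_dir = os.path.dirname(resolved)
    # Fallback directories copied from the example config above.
    fallback_dirs = ["/usr/local/bin", "/opt/homebrew/bin", "/usr/bin", "/bin"]
    path_dirs = [bin_dir] + [d for d in fallback_dirs if d != bin_dir]
    return {
        "command": resolved,
        "args": ["@savecontext/mcp"],
        "env": {"PATH": ":".join(path_dirs)},
    }


print(build_server_entry("/Users/YOUR_USERNAME/.bun/bin/bunx"))
```

Run it from a terminal where `bunx` works, since a GUI app's environment is exactly the one that lacks the right PATH.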

"Module not found" error — If you see Module not found "/opt/homebrew/bin/../dist/index.js" or similar, you have a cached older version.

Fix: Clear the bunx cache:

    rm -rf ~/.bun/install/cache/@savecontext*
    bunx @savecontext/mcp@latest --version  # Should show 0.1.27+
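When scripting the "0.1.27+" check, compare version numbers as integers, not strings, because "0.1.9" sorts after "0.1.27" as text. The sketch below assumes the `--version` output contains a MAJOR.MINOR.PATCH triple somewhere in it; the exact output format is not documented here, and the helper names are hypothetical.

```python
import re

MIN_VERSION = (0, 1, 27)


def parse_version(text):
    """Extract the first MAJOR.MINOR.PATCH triple from text as a tuple of ints."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    return tuple(int(part) for part in match.groups())


def is_current(version_output):
    """True if the reported version is at least MIN_VERSION."""
    return parse_version(version_output) >= MIN_VERSION


print(is_current("0.1.27"), is_current("0.1.9"))
```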

Optional: Install Ollama for AI-powered semantic search:

ollama pull nomic-embed-text

📊 Dashboard

A local web interface for visual session, context, memory, plan, and issue management.

    # Run the dashboard (starts on port 3333)
    bunx @savecontext/dashboard

    # Or specify a custom port
    bunx @savecontext/dashboard -p 4000

Requires Bun. Install it with: curl -fsSL https://bun.sh/install | bash

Features:

- Projects View: See all projects with session counts

- Sessions: Browse sessions, view context items, manage checkpoints

- Memory: View and manage project memory (commands, configs, notes)

- Issues: Track tasks, bugs, features with Linear-style interface

- Plans: Create and manage PRDs/specs linked to issues

Note: The dashboard reads from the same SQLite database as the MCP server (~/.savecontext/data/savecontext.db).
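Because the data lives in an ordinary SQLite file, you can inspect it with any SQLite client. The sketch below uses Python's standard `sqlite3` module to open a database read-only, report its journal mode, and list its tables. It makes no assumption about SaveContext's schema and simply prints whatever tables exist; `inspect_database` is a hypothetical helper name.

```python
import sqlite3
from pathlib import Path

# Database location as stated in the note above.
DB_PATH = Path.home() / ".savecontext" / "data" / "savecontext.db"


def inspect_database(db_path):
    """Return (journal_mode, table_names) for a SQLite file, opened read-only."""
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    conn = sqlite3.connect(uri, uri=True)
    try:
        journal_mode = conn.execute("PRAGMA journal_mode").fetchone()[0]
        rows = conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        ).fetchall()
        return journal_mode, [name for (name,) in rows]
    finally:
        conn.close()


if DB_PATH.exists():
    mode, tables = inspect_database(DB_PATH)
    print(mode, tables)
```

Opening with `mode=ro` keeps an exploratory script from modifying data the MCP server and dashboard both rely on.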

To run the dashboard from source:

    git clone https://github.com/greenfieldlabs-inc/savecontext.git
    cd savecontext/dashboard
    bun install
    bun dev            # runs on port 3333
    bun dev -p 4000    # or specify a custom port

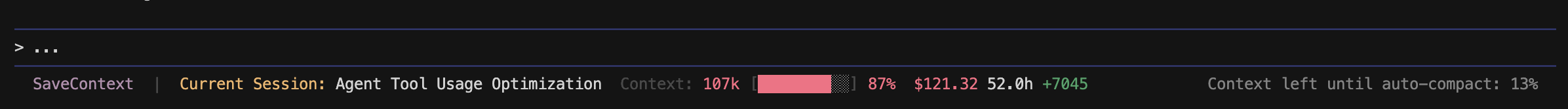

Status Line

Display real-time SaveContext session info directly in your terminal.

| Tool | Status |
|------|--------|
| Claude Code | Supported (native statusline) |
| OpenCode | Coming soon (tmux integration) |
| Other CLI tools | Coming soon (tmux integration) |

Note: IDE-based tools like Cursor, Windsurf, VS Code, etc. show MCP connection status in their own UI - this terminal status line feature is for CLI-based tools.

What You See

| Metric | Description |
|--------|-------------|
| Session Name | Your current SaveContext session |
| Context | Token count + visual progress bar + percentage |
| Cost | Running cost for the session |
| Duration | How long the session has been active |
| Lines | Net lines changed (+/-) |
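To make the Context metric concrete, here is one way a token count, progress bar, and percentage could be rendered as a single string. The layout, bar characters, and the 200,000-token limit are invented for illustration; the actual statusline output may look different.

```python
def render_context_bar(tokens_used, token_limit, width=10):
    """Format a token count as 'used/limit [bar] pct%'."""
    if token_limit <= 0:
        raise ValueError("token_limit must be positive")
    # Clamp to the 0..1 range so an over-limit count still draws a full bar.
    fraction = min(max(tokens_used / token_limit, 0.0), 1.0)
    filled = round(fraction * width)
    bar = "#" * filled + "-" * (width - filled)
    return f"{tokens_used}/{token_limit} [{bar}] {fraction * 100:.0f}%"


print(render_context_bar(100_000, 200_000))  # 100000/200000 [#####-----] 50%
```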

Quick Setup

    # Auto-detect and configure Claude Code
    bunx @savecontext/mcp@latest --setup-statusline

    # Or specify the tool explicitly
    bunx @savecontext/mcp@latest --setup-statusline --tool claude-code

    # Remove statusline configuration
    bunx @savecontext/mcp@latest --uninstall-statusline

Then restart Claude Code. That's it.

Claude Code

Claude Code has native statusline support. The setup script:

- Installs statusline.py to ~/.savecontext/

- Installs update-status-cache.py hook to ~/.savecontext/hooks/

- Updates ~/.claude/settings.json with statusline and hook configuration

Requires Python 3.x - see Troubleshooting if Python isn't detected.

How It Works

    ┌─────────────────┐
    │   Claude Code   │
    │  (PostToolUse   │
    │  Python hook)   │
    └────────┬────────┘
             │
             ▼
    ┌────────────────────────────────────┐
    │    ~/.savecontext/status-cache/    │
    │        (shared JSON cache)         │
    └────────────────┬───────────────────┘
                     │
                     ▼
            ┌─────────────────┐
            │  statusline.py  │
            │ (Claude native) │
            └─────────────────┘

- Hook Intercepts MCP Responses: When SaveContext tools execute, a PostToolUse Python hook writes session info to a local cache file (~/.savecontext/status-cache/<key>.json)

- Status Display Reads Cache: Claude Code's native statusline runs statusline.py on each prompt to read from the cache

- Terminal Isolation: Each terminal instance gets its own cache key, so multiple windows show their own sessions without overlap
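The write-then-read flow described above can be sketched as two small functions: one for the hook side and one for the statusline side. This is an illustration of the pattern, not the shipped scripts. The field name `session_name` and the function names are hypothetical, and the demo writes to a temporary directory instead of ~/.savecontext/status-cache/.

```python
import json
import tempfile
from pathlib import Path


def write_status(cache_dir, key, info):
    """Hook side: store session info as <cache_dir>/<key>.json."""
    cache_dir = Path(cache_dir)
    cache_dir.mkdir(parents=True, exist_ok=True)
    target = cache_dir / f"{key}.json"
    tmp = cache_dir / f"{key}.json.tmp"
    tmp.write_text(json.dumps(info), encoding="utf-8")
    tmp.replace(target)  # rename into place so a reader never sees a half-written file


def read_status(cache_dir, key):
    """Statusline side: return the cached info, or None if missing or unreadable."""
    try:
        text = (Path(cache_dir) / f"{key}.json").read_text(encoding="utf-8")
        return json.loads(text)
    except (FileNotFoundError, json.JSONDecodeError):
        return None


with tempfile.TemporaryDirectory() as demo_dir:
    # Two terminals with different keys keep separate state.
    write_status(demo_dir, "iterm-xyz789", {"session_name": "auth-refactor"})
    write_status(demo_dir, "kitty-12345", {"session_name": "docs"})
    print(read_status(demo_dir, "iterm-xyz789"))
    print(read_status(demo_dir, "unknown-key"))
```

Keying the file name on the terminal is what gives each window its own status without any locking between processes.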

Cross-Platform Support

The statusline scripts automatically detect your platform and terminal to generate a unique session key:

| Platform | Detection Method | Example Key |
|----------|------------------|-------------|
| Windows Terminal | WT_SESSION env var | wt-abc123-def |
| ConEmu/Cmder | ConEmuPID env var | conemu-12345 |
| Windows CMD/PS | SESSIONNAME + PPID | win-Console-1234 |
| WSL | Kernel contains "microsoft" | wt-* or wslpid-* |
| macOS Terminal.app | TERM_SESSION_ID | term-abc123 |
| iTerm2 | ITERM_SESSION_ID | iterm-xyz789 |
| GNOME Terminal | GNOME_TERMINAL_SERVICE | gnome-12345 |
| Konsole | KONSOLE_DBUS_SESSION | konsole-12345 |
| Kitty | KITTY_PID | kitty-12345 |
| Tilix | TILIX_ID | tilix-session-1 |
| Alacritty | ALACRITTY_SOCKET | alacritty-12345 |
| TTY (SSH, etc.) | ps -o tty= command | tty-pts_0 |
| Fallback | Parent process ID | linuxpid-*, macpid-*, winpid-* |
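The detection order in the table above amounts to "first matching signal wins". The sketch below shows that shape for a subset of the environment variables. It is an assumption-heavy illustration: how the real scripts shorten or transform each value is not documented here, the generic `pid-` fallback prefix stands in for the platform-specific ones, and checking ITERM_SESSION_ID before TERM_SESSION_ID is a guess based on iTerm2 setting both. The SAVECONTEXT_STATUS_KEY override is checked first.

```python
import os

# (environment variable, key prefix) pairs for a subset of the table.
# Order matters: the first variable that is set wins.
ENV_PREFIXES = [
    ("WT_SESSION", "wt"),
    ("ConEmuPID", "conemu"),
    ("ITERM_SESSION_ID", "iterm"),
    ("TERM_SESSION_ID", "term"),
    ("KITTY_PID", "kitty"),
    ("TILIX_ID", "tilix"),
]


def session_key(env, parent_pid):
    """Pick a cache key from an environment mapping, falling back to the parent PID."""
    override = env.get("SAVECONTEXT_STATUS_KEY")
    if override:
        return override
    for var, prefix in ENV_PREFIXES:
        value = env.get(var)
        if value:
            return f"{prefix}-{value}"
    # Hypothetical generic fallback; the table lists per-platform prefixes.
    return f"pid-{parent_pid}"


print(session_key(dict(os.environ), os.getppid()))
```

Passing the environment in as a plain mapping keeps the function easy to test without touching the real process environment.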

Manual Override: Set the SAVECONTEXT_STATUS_KEY environment variable to use a custom key:

ex

Pros

- Offers persistent memory for AI assistants

- Integrates context management and issue tracking

- Supports multiple coding tools and environments

- Fully offline with no cloud dependency

Cons

- Requires Bun for installation

- May have a learning curve for new users

- Limited to MCP-compatible clients

- Potential issues with GUI app compatibility


Disclaimer: This content is sourced from GitHub open source projects for display and rating purposes only.

Copyright belongs to the original author greenfieldlabs-inc.